3D Point Cloud Technology Series II - Dr. Yang Junchao

2021-08-03

3D data can be represented in a variety of formats, including depth images, point clouds, meshes, and volumetric grids. As a common format, the point cloud retains the original geometric information in three-dimensional space without any discretization. It is therefore the preferred representation for many scene-understanding applications such as autonomous driving and robotics. In recent years, deep learning has become a research hotspot in computer vision, speech recognition, natural language processing, bioinformatics and other fields. However, deep learning on 3D point clouds still faces many challenges, such as the small scale of data sets, their high dimensionality and the unstructured nature of the data. Against this background, this article outlines the progress of deep learning methods for 3D point cloud data, covering 3D shape classification, 3D object detection and tracking, and 3D point cloud segmentation. Deep learning on point clouds has attracted increasing attention, especially in the last few years with the release of publicly available data sets such as ModelNet, ShapeNet, ScanNet, Semantic3D and the KITTI Vision Benchmark Suite, which have further promoted research in this area.

Overview of 3D point cloud processing methods based on deep learning

3D point cloud shape classification

Methods for this task usually first learn an embedding for each point, then use an aggregation method to extract a global shape embedding from the whole point cloud, and finally perform classification through several fully connected layers. Depending on how the per-point features are learned, existing 3D shape classification methods can be divided into projection-based networks and point-based networks. Projection-based approaches first project the unstructured point cloud into an intermediate regular representation, and then use well-established 2D or 3D convolutions to classify the shape. In contrast, point-based approaches work directly on the raw point cloud without any voxelization or projection. Because they introduce no explicit information loss, point-based approaches are becoming increasingly popular.
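The three-step pipeline described above (per-point embedding, global aggregation, fully connected classifier) can be sketched in a few lines. This is only an illustrative sketch in plain Python, assuming a single shared layer, channel-wise max pooling as the aggregation method, and random untrained weights; the names `shared_mlp`, `global_max_pool`, `fully_connected` and `classify` are hypothetical and do not come from any particular library.

```python
import random

def shared_mlp(points, weights, bias):
    """Apply one linear layer + ReLU to every point independently (shared weights)."""
    features = []
    for p in points:
        row = []
        for j in range(len(bias)):
            z = sum(p[i] * weights[i][j] for i in range(len(p))) + bias[j]
            row.append(max(0.0, z))
        features.append(row)
    return features

def global_max_pool(features):
    """Symmetric aggregation: channel-wise maximum over all points."""
    return [max(channel) for channel in zip(*features)]

def fully_connected(vec, weights, bias):
    """Plain linear layer mapping the global embedding to class scores."""
    return [sum(vec[i] * weights[i][j] for i in range(len(vec))) + bias[j]
            for j in range(len(bias))]

def classify(points, params):
    per_point = shared_mlp(points, params["w1"], params["b1"])  # 1. per-point embedding
    shape_embedding = global_max_pool(per_point)                 # 2. global aggregation
    return fully_connected(shape_embedding, params["w2"], params["b2"])  # 3. class scores

# Assumed, untrained parameters: 3 input coordinates -> 16 channels -> 4 classes.
rng = random.Random(0)

def random_matrix(rows, cols):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

params = {"w1": random_matrix(3, 16), "b1": [0.0] * 16,
          "w2": random_matrix(16, 4), "b2": [0.0] * 4}

cloud = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(128)]
shuffled = cloud[:]
rng.shuffle(shuffled)

scores = classify(cloud, params)
scores_shuffled = classify(shuffled, params)
print(scores == scores_shuffled)  # compares the scores for the two point orderings
```

Because the maximum does not depend on the order of its inputs, shuffling the points leaves the class scores unchanged, which is the property that makes this kind of aggregation suitable for unordered point sets.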

• Projection-based approach

In multi-view methods, a 3D object is first projected into multiple views and features are extracted from each view; these features are then fused for accurate object recognition. How to aggregate the features of multiple views into a discriminative global representation is a key challenge. Other projection-based methods use volumetric representations of the 3D point cloud instead.
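As a rough illustration of the multi-view idea, the sketch below projects a point cloud orthographically along each coordinate axis, treats each flattened image as a stand-in for a per-view feature vector, and fuses the views with an element-wise maximum. The three axis-aligned views, the binary occupancy images and the max-based fusion are all assumptions made for this example; in practice the per-view features would come from a 2D CNN.

```python
import math

def occupancy_view(points, axis, res=4, lo=-1.0, hi=1.0):
    """Orthographic projection along `axis` onto a res x res binary image."""
    u_ax, v_ax = [a for a in range(3) if a != axis]
    scale = res / (hi - lo)
    image = [[0.0] * res for _ in range(res)]
    for p in points:
        u = math.floor((p[u_ax] - lo) * scale)
        v = math.floor((p[v_ax] - lo) * scale)
        if 0 <= u < res and 0 <= v < res:  # ignore points outside the view
            image[v][u] = 1.0
    return image

def view_feature(image):
    """Placeholder for a 2D CNN: here simply the flattened image."""
    return [pixel for row in image for pixel in row]

def view_pool(features):
    """Fuse per-view features with an element-wise maximum."""
    return [max(values) for values in zip(*features)]

points = [(-0.9, -0.9, -0.9), (0.1, 0.6, -0.4), (0.8, 0.2, 0.7)]
views = [occupancy_view(points, axis) for axis in range(3)]
features = [view_feature(view) for view in views]
global_descriptor = view_pool(features)
print(len(global_descriptor))
```

An element-wise maximum is only one simple fusion choice; it is independent of view order but discards how the views relate to each other, which is part of why view aggregation remains a challenge.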

• Point-based network

Based on the network architecture used to learn the features of each point, these approaches can be divided into point-wise MLP networks, convolution-based networks, graph-based networks, data indexing-based networks, and other typical networks.

3D point cloud object detection and tracking

• 3D object detection

The task of 3D object detection is to accurately locate all objects of interest in a given scene. Similar to object detection in images, 3D object detection methods can be divided into two categories: region proposal-based methods and single shot methods.

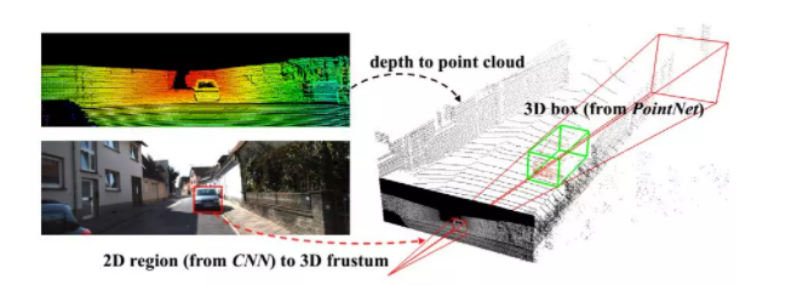

Region proposal-based methods: These methods first propose several areas (also called proposals) that may contain objects, and then extract the area features to determine the category label of each proposal. According to their proposal generation methods, these methods can be further divided into three categories: the multi-view-based approach, the segmentation based approach, and the Frustum-based approach.

FIG. 1 3D target detection
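To make the frustum-based idea more concrete, the following sketch keeps only the points whose image projection falls inside a 2D detection box, which yields a frustum-shaped 3D proposal region. It assumes the points are already expressed in the camera frame with z pointing forward, and it uses a simple pinhole model; the function name `points_in_frustum` and the intrinsics `fx`, `fy`, `cx`, `cy` are hypothetical values chosen for the example.

```python
def points_in_frustum(points, box2d, fx, fy, cx, cy):
    """Return the points whose pinhole projection lies inside a 2D box.

    box2d is (u_min, v_min, u_max, v_max) in pixels.
    """
    u_min, v_min, u_max, v_max = box2d
    kept = []
    for x, y, z in points:
        if z <= 0:  # behind the camera, cannot be seen
            continue
        u = fx * x / z + cx  # pinhole projection to pixel coordinates
        v = fy * y / z + cy
        if u_min <= u <= u_max and v_min <= v <= v_max:
            kept.append((x, y, z))
    return kept

cloud = [(0.0, 0.0, 5.0), (1.0, 0.0, 5.0), (0.0, 0.0, -5.0), (0.2, 0.2, 10.0)]
proposal = points_in_frustum(cloud, box2d=(40, 40, 60, 60),
                             fx=100.0, fy=100.0, cx=50.0, cy=50.0)
print(proposal)
```

The selected points would then be passed to a second stage that estimates the 3D bounding box and category for the proposal.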

Single shot methods directly predict class probabilities and regress the 3D bounding boxes of objects using a single-stage network. These methods do not require region proposal generation or post-processing. As a result, they run at high speed, making them well suited to real-time applications. According to the type of input data, they can be divided into two types: BEV (bird's-eye view projection) based methods and point cloud based methods.
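A bird's-eye view input can be built by discretizing the ground plane into cells and summarizing the points that fall into each cell. The sketch below stores the maximum height per cell; the choice of a max-height map, the cell size and the function name `bev_max_height` are assumptions made for illustration, and real detectors typically encode more channels per cell.

```python
import math

def bev_max_height(points, cell=1.0):
    """Map each (ix, iy) ground-plane cell to the maximum z of the points inside it."""
    grid = {}
    for x, y, z in points:
        key = (math.floor(x / cell), math.floor(y / cell))
        grid[key] = max(z, grid.get(key, z))
    return grid

cloud = [(0.2, 0.3, 1.0), (0.8, 0.1, 2.5), (1.5, 0.5, 0.7), (-0.5, 0.5, 0.3)]
bev = bev_max_height(cloud, cell=1.0)
print(bev)
```

The resulting regular grid can be fed to an ordinary 2D convolutional network, at the cost of losing the detail of points that share a cell.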

• 3D object tracking

Given the position of an object in the first frame, the task of object tracking is to estimate its state in subsequent frames. Because 3D object tracking can make use of the rich geometric information in point clouds, it is expected to overcome the drawbacks of 2D image-based tracking, such as occlusion, illumination changes and scale variation. Beyond direct tracking, some algorithms build on flow estimation: similar to optical flow estimation in 2D vision, several methods learn useful information (such as 3D scene flow and spatio-temporal information) from point cloud sequences.

3D point cloud segmentation

3D point cloud segmentation requires understanding both the global geometric structure and the fine-grained details of each point. According to segmentation granularity, 3D point cloud segmentation methods can be divided into three categories: semantic segmentation (scene level), instance segmentation (object level) and part segmentation (part level).

• Semantic segmentation

Semantic segmentation operates at the scene level and mainly includes projection-based and point-based methods.

Projection-based segmentation algorithms: these mainly include multi-view representation, spherical representation, volumetric representation, permutohedral lattice representation, and hybrid representation.

Point-based segmentation algorithms: point-based networks work directly on irregular point clouds. However, point clouds are unordered and unstructured, so standard CNNs cannot be applied to them directly. To this end, the groundbreaking PointNet was proposed to learn per-point features using shared MLPs and global features using symmetric pooling functions. Building on this idea, later methods can be roughly divided into point-wise MLP methods, point convolution methods, RNN-based methods and graph-based methods.
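For segmentation, the per-point and global features are combined so that each point is labeled with knowledge of the whole shape. The sketch below follows the PointNet-style idea of concatenating each point's feature with the max-pooled global feature and then scoring every point; the tiny linear classifier, its hand-picked weights and the function names are assumptions for illustration, not the published architecture.

```python
def global_max_pool(per_point):
    """Symmetric pooling: channel-wise maximum over all points."""
    return [max(channel) for channel in zip(*per_point)]

def concat_global(per_point):
    """Append the global feature to every per-point feature."""
    global_feature = global_max_pool(per_point)
    return [list(feature) + global_feature for feature in per_point]

def label_points(per_point, class_weights):
    """Score each [local | global] feature with one weight vector per class."""
    labels = []
    for feature in concat_global(per_point):
        scores = [sum(f * w for f, w in zip(feature, weights))
                  for weights in class_weights]
        labels.append(max(range(len(scores)), key=scores.__getitem__))
    return labels

# Assumed per-point features (2 channels) and weights for 2 classes.
per_point = [[1.0, 0.0], [0.0, 2.0], [0.2, 0.9]]
class_weights = [[1.0, 0.0, 0.0, 0.0],
                 [0.0, 1.0, 0.0, 0.0]]
labels = label_points(per_point, class_weights)
print(labels)
```

Here each point's feature grows from 2 to 4 channels after concatenation, and the classifier produces one label per point instead of one label per shape.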

• Instance segmentation

Instance segmentation is more challenging than semantic segmentation because it requires more accurate and fine-grained reasoning about points. In particular, it must distinguish not only points with different semantic meanings but also separate instances that share the same semantic meaning. In general, existing methods can be divided into two categories: proposal-based methods and proposal-free methods.

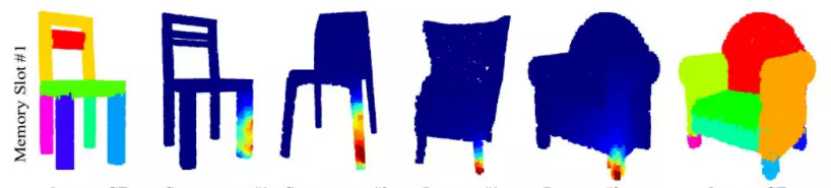

Based on the proposal method, the case segmentation problem is transformed into two sub-tasks: 3D object detection and instance mask prediction. However, the proposal-free method has no object detection module. On the contrary, such methods usually regard instance segmentation as a subsequent clustering step after semantic segmentation. In particular, most existing approaches are based on the assumption that points belonging to the same instance should have very similar characteristics. Therefore, these methods mainly focus on discriminating feature learning and point grouping.

FIG. 2 Segmentation of 3D point cloud instances
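The proposal-free grouping step can be illustrated with a simple clustering sketch: points that share a semantic label and whose feature embeddings lie close together are merged into the same instance. The breadth-first region growing used here, the distance threshold `radius` and the function name `group_instances` are assumptions chosen for clarity; published methods use learned embeddings and more elaborate grouping.

```python
import math
from collections import deque

def group_instances(embeddings, semantic_labels, radius):
    """Assign an instance id to each point by growing regions of similar points."""
    n = len(embeddings)
    instance = [-1] * n  # -1 means "not assigned yet"
    next_id = 0
    for seed in range(n):
        if instance[seed] != -1:
            continue
        instance[seed] = next_id
        queue = deque([seed])
        while queue:
            i = queue.popleft()
            for j in range(n):
                if (instance[j] == -1
                        and semantic_labels[j] == semantic_labels[i]
                        and math.dist(embeddings[i], embeddings[j]) <= radius):
                    instance[j] = next_id
                    queue.append(j)
        next_id += 1
    return instance

embeddings = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.1, 5.0), (0.05, 0.05)]
semantic_labels = ["chair", "chair", "chair", "chair", "table"]
instances = group_instances(embeddings, semantic_labels, radius=0.5)
print(instances)
```

In this toy input the four "chair" points split into two instances because their embeddings form two distant groups, while the "table" point becomes its own instance even though its embedding is close to the first group.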

• Part segmentation

There are two difficulties in part segmentation of 3D shapes. First, shape parts with the same semantic label show large geometric variation and ambiguity. Second, the method should be robust to noise and to variations in sampling.

Conclusion

Because 3D point clouds carry spatial coordinates, they are widely used in construction, industry, automotive, gaming, criminal investigation and many other fields. Deep learning on 3D point clouds still faces several major challenges, such as the small size of data sets, high dimensionality and the unstructured nature of 3D point clouds. This article has reviewed recent deep learning based 3D point cloud processing; the classical 3D point cloud processing methods and their applications in industry will be analyzed and explained in further detail.